IHPER: Idiap Human Perception system

Description

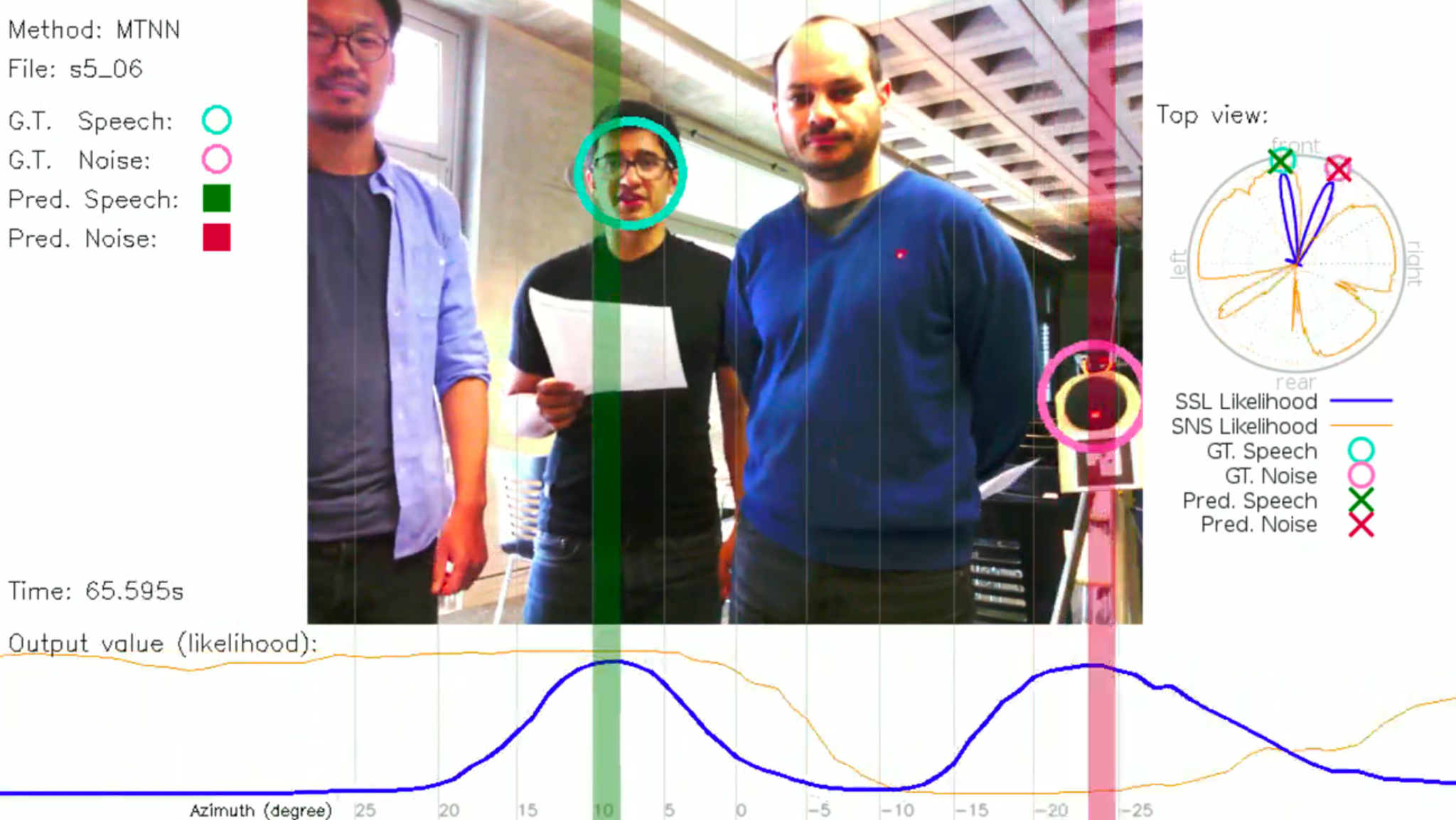

The Idiap Human Perception system is a real-time multimodal system for person tracking, face re-identification, sound source localisation, and visual focus of attention.

Publications

Canévet, O., He, W., Motlicek, P. and Odobez, J.-M. (2020). The MuMMER data set for Robot Perception in multi-party HRI Scenarios. 29th IEEE International Conference on Robot & Human Interactive Communication.

Links

- Repository: https://github.com/idiap/ihper

- Video: https://www.youtube.com/watch?v=Cfsc0zXAMVU

Advantages

A key element of human-robot interaction is the robot's perception of its environment: detecting people and understanding what they do, who they are, and what they say. This system detects faces, tracks them over time, re-identifies people when they re-enter the field of view, and detects whether they are speaking and where they are looking. The system runs in real time and is robot-agnostic via ROS (currently, the supported robot is Pepper, and any depth camera can be used).

Applications

Current applications include human-robot interaction, for instance with an entertainment robot such as Pepper. This audio-visual system has been shown to run without crashing for several hours on a GPU laptop with ROS. It was deployed in a shopping mall for several weeks (the full project demo had to be monitored by a human, but the IHPER component never crashed).

Technology Readiness Level

TRL 6

Contact us for more information

- Interested in using our technologies?

- Interested in learning more about licensing possibilities and conditions?